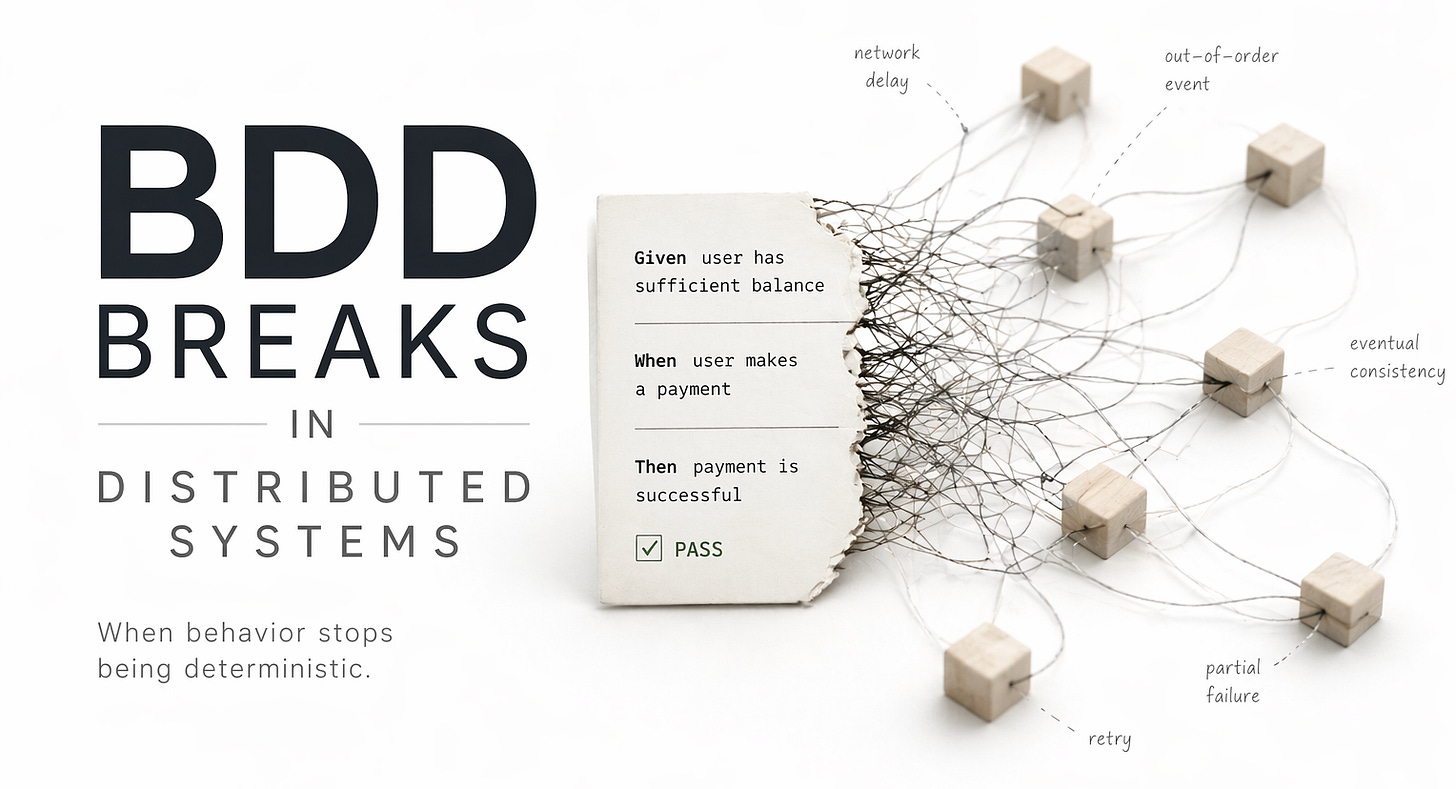

BDD (Behavior-Driven Development) Breaks in Distributed Systems

Given/When/Then assumes a deterministic world. Distributed systems don't have one.

Here is a scenario that looks perfectly reasonable:

Given a user has $100 in their account

When they transfer $60 to another user

Then their balance should be $40

Clean. Readable. Everyone agrees. Stakeholders understand it, QA signs off, and it passes locally every time. Then you run it in a real system. There’s a message broker. Two payment services. A database replicating across availability zones. Now it starts failing. Not consistently, maybe one in twenty runs.

Sometimes the balance is still $100 because the read hit a replica that hasn’t caught up yet. Sometimes the transfer runs twice because a consumer retried after a timeout. Sometimes the API returns 200 OK, but the debit hasn’t happened yet; it’s still in a queue. Nothing about the business rule changed. The logic is still correct.

What broke is the meaning of “Then”.

What BDD Was Actually Selling

BDD came from a real problem. Unit tests could verify code, but they couldn’t tell if the system matched business intent. Dan North’s idea was to close that gap by describing behavior in a way everyone could read and agree on.

The goal wasn’t test coverage. It was a shared understanding. And in the systems BDD grew up in, that worked. A request comes in, something happens, and a response goes out. The system is mostly synchronous, and side effects are immediate.

When you say, “Then the balance should be $40,” you mean something very concrete: the request finishes, you check the database, and the value is there. Simple. Direct. Mostly true. If your system still behaves like that, BDD holds up fine.

The problems start when you carry that assumption into systems that don’t.

In practice, BDD scenarios drift. They grow. Someone maintains them. They pass in CI and fail in staging. Eventually, someone adds a sleep(500) to “stabilize” things. At that point, the scenarios are no longer describing the system. They’re describing a simplified version of it, one that behaves nicely, responds immediately, and never surprises you.

That version doesn’t exist in production.

Now you’re paying the cost of maintaining human-readable specs and getting false confidence in return.

The Determinism That BDD Requires

Every Given/When/Then scenario makes an implicit contract: given this exact state, when this exact thing happens, then this exact outcome will follow.

That contract depends on determinism. The system has to be in a known state before the action, the action has to produce a predictable sequence of effects, and the result has to be observable at the moment you assert it.

This is not an implementation detail. It is structural. The format itself has no way to express statements like “Then the balance will eventually be $40” or “Then at least one of these outcomes will happen.” It assumes a single snapshot in time and assumes that the snapshot is meaningful.

In a monolithic system with synchronous execution and strong transactional guarantees, that assumption holds. In most systems being built today, it does not.

How Distributed Systems Actually Work

A distributed system does not process a request. It processes a chain of events, partial states, concurrent operations, and compensating actions that together produce an outcome over time. That outcome may not be visible immediately, and in some cases may not become visible at all.

Consider the same payment scenario. A user submits a transfer. The API validates the request and publishes a transfer_requested event. A service consumes that event and debits the sender, then emits a sender_debited event. Another service credits the recipient. A third service updates a read model that the balance API queries.

Each step can fail independently. Each can be retried. Each can execute out of order. The read model can lag behind the write path by milliseconds or seconds, depending on load. At what point is the “Then” evaluated?

Immediately after the HTTP response, when the transfer is still in-flight

After a fixed delay, which is effectively a guess

By polling until the condition becomes true, which turns the scenario into a different kind of test

Partial failure makes this harder. In a real system, success is not binary. The debit may succeed while the credit fails. A saga may roll the debit back. A dead-letter queue may hold the credit event for later recovery. From the outside, the request returns 200. Inside the system, the money may be in an intermediate state that no single BDD scenario can describe.

Concurrency introduces another layer of complexity. Two transfers initiated at the same time against the same account may both read the same balance, both pass validation, and both attempt to debit, resulting in an overdraft that no isolated scenario would predict.
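The interleaving described above can be replayed deterministically. The following is a minimal sketch, assuming a hypothetical in-memory `accounts` dict and a transfer split into a read-and-validate step and a write step; none of these names come from a real payment system.

```python
# Hypothetical in-memory store standing in for the account database.
accounts = {"alice": 100}

def read_and_validate(account: str, amount: int) -> int:
    """Step 1: read the balance and check that funds are sufficient."""
    balance = accounts[account]
    if balance < amount:
        raise ValueError("insufficient funds")
    return balance

def write_debit(account: str, amount: int) -> None:
    """Step 2: apply the debit to whatever the balance is at write time."""
    accounts[account] -= amount

# Two transfers of $60 interleave: both validate before either one writes.
read_and_validate("alice", 60)   # transfer A sees 100 and passes
read_and_validate("alice", 60)   # transfer B also sees 100 and passes
write_debit("alice", 60)         # A debits, balance is now 40
write_debit("alice", 60)         # B debits, balance is now -20

final_balance = accounts["alice"]
print(final_balance)  # -20
```

Each transfer, run on its own, satisfies the original scenario. Only the interleaving produces the overdraft.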

BDD evaluates scenarios in isolation. Distributed systems fail through interaction.

Eventual consistency is not a bug. It is a deliberate tradeoff that allows systems to scale. BDD, however, assumes strong consistency at the moment of assertion. These two assumptions are fundamentally incompatible.

The Gap Between Scenario and System

When BDD scenarios describe a distributed system, they tend to fall into one of three patterns. All of them are problematic.

The first is abstraction. The scenario describes a system that does not exist. It talks to a facade that synchronously resolves all the asynchronous work before returning. The test passes, but it is not testing the real system. It is testing a simulation that removes exactly the properties you need to be confident about in production.

The second is brittleness. The scenarios exercise the real system, but rely on timing assumptions, polling, or retry logic hidden inside step definitions. They start to flap. Failures show up with no clear cause. Someone investigates, finds no real bug, and marks it as flaky. Over time, the suite stays green, but nobody actually trusts it.

The third is incompleteness. The scenarios cover the happy path and a few obvious errors, but the interesting failure modes never make it in. Nobody writes something like:

Given a transfer is in-flight when the credit service goes down

Then the saga coordinator retries the credit within 30 seconds

And the read model is consistent within 60 seconds

That scenario is both true and important. It is also awkward to write, hard to automate, and not readable for non-technical stakeholders. So it gets skipped. The format pushes you toward scenarios that are easy to express in BDD, not scenarios that actually capture the risk surface of the system.

AI Systems Make This Untenable

If distributed systems stretch BDD to its limits, AI systems break it completely. A large language model does not produce deterministic output. Given the same input, it can return different responses across runs. A recommendation system shifts as models are retrained. An anomaly detection system changes behavior as the definition of “normal” evolves. So what does a BDD scenario even mean here?

Given a user asks "What is the capital of France?"

When the model processes the request

Then the response should be "Paris"

This might pass today. It might fail after the next fine-tuning run. Even if it passes, it tells you almost nothing about the system’s quality. The real questions are not about exact answers. They are about distributions:

How often is the model factually wrong?

How often does latency exceed acceptable thresholds?

How does quality degrade at the edges of the input space?

None of these fit into a Given/When/Then structure. The same issue appears in any system driven by embeddings, feedback loops, or learned features. The output is no longer just a function of the input. It depends on:

model weights

training data

feature pipelines

accumulated distribution shift

At that point, an assertion like “Then the recommendation should be X” is no longer meaningful.

What Actually Works

None of this means you stop specifying system behavior. It means you choose tools that match the actual properties of the system you are building.

Invariants over exact outcomes.

Instead of asserting that a specific balance is reached, assert what must always hold. The sum of all balances remains constant. No account goes below zero without an overdraft agreement. Every transfer eventually results in either a completed credit or a compensating action. These statements survive timing, retries, and partial failure. They describe the system as it really behaves, not as we wish it behaved.
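The three invariants above can be written as a single check. This is a sketch under stated assumptions: the `Transfer` record, its `status` values, and the `overdraft_allowed` set are hypothetical, and the check is meant to run once the system has settled, since money in flight is temporarily missing from the account totals.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Transfer:
    transfer_id: str
    status: str  # hypothetical terminal states: "credited" or "compensated"

def check_invariants(balances, expected_total, transfers,
                     overdraft_allowed=frozenset()):
    """Return a list of violated invariants; an empty list means all hold."""
    violations = []
    # Invariant 1: money is neither created nor destroyed.
    if sum(balances.values()) != expected_total:
        violations.append("total balance changed")
    # Invariant 2: no account is negative without an overdraft agreement.
    for account, balance in balances.items():
        if balance < 0 and account not in overdraft_allowed:
            violations.append(f"{account} is below zero")
    # Invariant 3: every transfer ended in a credit or a compensating action.
    for t in transfers:
        if t.status not in {"credited", "compensated"}:
            violations.append(f"{t.transfer_id} has not settled")
    return violations

ok = check_invariants({"alice": 40, "bob": 60}, 100,
                      [Transfer("t1", "credited")])
bad = check_invariants({"alice": -20, "bob": 60}, 100,
                       [Transfer("t2", "pending")])
print(ok)   # []
print(bad)  # three violations: total, negative balance, unsettled transfer
```

The check does not care which path the system took to reach its state, which is why it survives retries and reordering.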

Contract testing at boundaries.

In distributed systems, the useful question is not “does the system do X end-to-end,” but “does service A honor what service B depends on.” Tools like Pact let you verify these contracts independently. This gets you closer to BDD’s original goal, shared understanding, without requiring unrealistic end-to-end determinism.
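To show the idea without reproducing Pact's API, here is a hand-rolled sketch of a consumer-side contract. The field names on the `sender_debited` event are assumptions made for illustration; a real contract would come from the consuming team.

```python
# Hypothetical contract: the fields the credit service reads
# from a sender_debited event, with the types it relies on.
CONSUMER_EXPECTS = {"transfer_id": str, "recipient": str, "amount_cents": int}

def contract_violations(event: dict, expected: dict) -> list:
    """Compare one producer sample against the consumer's expectations."""
    problems = []
    for field, field_type in expected.items():
        if field not in event:
            problems.append(f"missing field: {field}")
        elif not isinstance(event[field], field_type):
            problems.append(f"wrong type for {field}")
    return problems

# Extra fields are fine: the consumer only cares about what it reads.
producer_sample = {"transfer_id": "t1", "recipient": "bob",
                   "amount_cents": 6000, "sender": "alice"}
# A producer that renamed the amount field breaks the consumer.
broken_sample = {"transfer_id": "t1", "recipient": "bob", "amount": "60.00"}

print(contract_violations(producer_sample, CONSUMER_EXPECTS))  # []
print(contract_violations(broken_sample, CONSUMER_EXPECTS))
```

The producer can run this check in its own pipeline, without the consumer, the broker, or any timing assumptions being involved.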

Tolerance windows instead of exact assertions.

Eventual consistency forces you to define how eventual is acceptable. Saying “the read model reflects the write within 500ms under normal load” is a real requirement. Saying “the balance is immediately updated after the request” is not, at least not in a system built on asynchronous replication.
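A tolerance window can be made explicit in a test helper. The sketch below assumes a hypothetical `read_balance` function that stands in for the balance API, with a simulated read model that catches up 50 ms after the write.

```python
import time

def eventually(condition, timeout_s, interval_s=0.01):
    """Poll `condition` until it returns True or the deadline passes.

    Returns the elapsed seconds on success; raises AssertionError on timeout.
    """
    start = time.monotonic()
    deadline = start + timeout_s
    while True:
        if condition():
            return time.monotonic() - start
        if time.monotonic() >= deadline:
            raise AssertionError(f"condition not met within {timeout_s}s")
        time.sleep(interval_s)

# Hypothetical read model that lags the write path by 50 ms.
write_time = time.monotonic()

def read_balance():
    return 40 if time.monotonic() - write_time >= 0.05 else 100

# The requirement is the 500 ms bound, not the exact moment of convergence.
elapsed = eventually(lambda: read_balance() == 40, timeout_s=0.5)
print(f"read model converged after {elapsed:.3f}s")
```

Unlike a bare sleep(500), the bound here is the stated requirement, and exceeding it is reported as a violation of that requirement instead of an unexplained flaky failure.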

Chaos and property-based testing.

The interesting scenarios are not happy paths. They are failure modes. What happens when a consumer is slow? When a service is unavailable? When messages are duplicated? Property-based testing lets you say “this invariant must always hold” and then actively search for the inputs that break it.
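As an illustration of searching for breaking inputs, here is a hand-rolled sketch that uses only the standard library's `random` module; in practice a library such as Hypothesis would handle generation and shrinking. The message tuples and both consumers are hypothetical. The property is that at-least-once delivery must produce the same balances as exactly-once delivery.

```python
import random

def apply_naive(balances, messages):
    """Consumer that applies every delivery, including duplicates."""
    out = dict(balances)
    for msg_id, src, dst, amount in messages:
        out[src] -= amount
        out[dst] += amount
    return out

def apply_idempotent(balances, messages):
    """Consumer that skips message ids it has already processed."""
    out, seen = dict(balances), set()
    for msg_id, src, dst, amount in messages:
        if msg_id in seen:
            continue
        seen.add(msg_id)
        out[src] -= amount
        out[dst] += amount
    return out

def find_counterexample(consumer, trials=200, seed=0):
    """Search random delivery sequences for one where duplicates change the result."""
    rng = random.Random(seed)
    start = {"alice": 100, "bob": 100}
    for _ in range(trials):
        unique = [(i, "alice", "bob", rng.randint(1, 20))
                  for i in range(rng.randint(1, 5))]
        # At-least-once delivery: each message arrives once or twice, in any order.
        delivered = [m for m in unique for _ in range(rng.randint(1, 2))]
        rng.shuffle(delivered)
        # Reference result: every unique message applied exactly once.
        expected = apply_naive(start, unique)
        if consumer(start, delivered) != expected:
            return delivered
    return None

print(find_counterexample(apply_naive) is not None)    # True: duplicates break it
print(find_counterexample(apply_idempotent) is None)   # True: property holds
```

Nobody had to think of the specific duplicate that breaks the naive consumer. The search found it from the statement of the property alone.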

Observability as a requirement.

In production, systems are understood through metrics, traces, and logs, not pass/fail assertions. Being able to detect “this saga failed to complete within its SLA” is often more valuable than a suite of BDD scenarios that only pass when everything behaves nicely.
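A detection rule of that kind can be small. The sketch below assumes a hypothetical `SagaRecord` shape built from trace or log data, with timestamps in seconds; the 60-second SLA is an example value.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class SagaRecord:
    saga_id: str
    started_at: float              # seconds on some shared clock
    completed_at: Optional[float]  # None while the saga is still in flight

def sla_breaches(records, now, sla_s):
    """Return ids of sagas that finished late or are still open past the SLA."""
    breached = []
    for r in records:
        # An unfinished saga is measured against the current time.
        end = r.completed_at if r.completed_at is not None else now
        if end - r.started_at > sla_s:
            breached.append(r.saga_id)
    return breached

records = [
    SagaRecord("s1", started_at=0.0, completed_at=12.0),   # on time
    SagaRecord("s2", started_at=0.0, completed_at=75.0),   # finished late
    SagaRecord("s3", started_at=10.0, completed_at=None),  # stuck in flight
]
late = sla_breaches(records, now=100.0, sla_s=60.0)
print(late)  # ['s2', 's3']
```

The stuck saga `s3` is the case a pass/fail scenario never sees: nothing failed, it just never finished.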

For AI systems, evaluation replaces assertion.

You define metrics. You build datasets that represent real inputs. You track how the system performs over time. A regression is not “this scenario failed.” A regression is “quality dropped 4% this week, concentrated in this category.”
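In code, that kind of regression check compares per-category metrics between two evaluation runs. The sketch below uses made-up results and a hypothetical 2% threshold; the categories and counts are illustrative only.

```python
from collections import defaultdict

def accuracy_by_category(results):
    """results: iterable of (category, is_correct) pairs from one evaluation run."""
    totals, correct = defaultdict(int), defaultdict(int)
    for category, is_correct in results:
        totals[category] += 1
        correct[category] += int(is_correct)
    return {c: correct[c] / totals[c] for c in totals}

def regressions(baseline, current, max_drop=0.02):
    """Return categories whose accuracy fell by more than `max_drop`."""
    return {
        c: round(baseline[c] - current[c], 4)
        for c in baseline
        if c in current and baseline[c] - current[c] > max_drop
    }

# Made-up evaluation results for two consecutive weeks.
last_week = accuracy_by_category(
    [("geography", True)] * 95 + [("geography", False)] * 5
    + [("dates", True)] * 90 + [("dates", False)] * 10
)
this_week = accuracy_by_category(
    [("geography", True)] * 94 + [("geography", False)] * 6
    + [("dates", True)] * 80 + [("dates", False)] * 20
)
print(regressions(last_week, this_week))  # {'dates': 0.1}
```

The one-point drop in geography stays under the threshold and is treated as noise, while the ten-point drop in dates is flagged along with the category it is concentrated in.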

The Right Tool for the Complexity You Have

BDD is not a bad idea. For systems with clear business rules, synchronous flows, and a need for shared understanding between product and engineering, it still works. It forces useful conversations. It creates readable documentation.

The problem is not BDD itself. It is where we apply it.

A tool that works for a monolith does not automatically work for a system of multiple services communicating over a message bus. A testing model built on deterministic, synchronous assertions does not translate cleanly into a system where timing varies, outcomes are probabilistic, and failure is expected.

Engineers who have lived through this recognize the pattern. The BDD suite grows. Flakiness grows with it. Trust in the tests declines. Eventually, the tests remain in CI, but nobody really believes them.

And when production incidents happen, they happen between scenarios. In the failure modes nobody wrote down. The issue is not that we lack better tools. We already have them: invariant testing, contract testing, chaos engineering, property-based testing, SLOs, and observability. The issue is inertia.

BDD worked in simpler systems, so it keeps getting applied even when the system has outgrown it. It feels familiar. The tooling is mature. It is easy to keep doing the same thing. But every hour spent maintaining scenarios that assume a synchronized, deterministic system is an hour not spent understanding how the system actually fails. That is what breaks in distributed systems.

Not BDD as a format, but the assumption behind it: that behavior can be fully captured as a set of discrete, deterministic, human-readable scenarios. In systems where behavior is emergent, time-dependent, and often probabilistic, that assumption does not hold. And building confidence on something that does not hold is how you end up with a green build and a production incident.